Tesla Autopilot Is Not Autonomous, Semi-Autonomous or Self-Driving

2019-10-02

(this post originally appeared on Why Is This Interesting, the newsletter started by Noah Brier and Colin Nagy)

Recently, the National Transportation Safety Board (NTSB) released its report on a 2018 crash in which a Tesla driver rammed into the back of a parked fire truck. The report suggests the driver was using the car’s advanced driver assistance system, aka Autopilot, at the point of collision. As with most crashes involving this system, it’s quite difficult to know how much attention the driver was paying to the road (if any) at the point of impact. This is because Tesla vehicles do not currently use an interior camera to monitor the driver’s state of awareness.

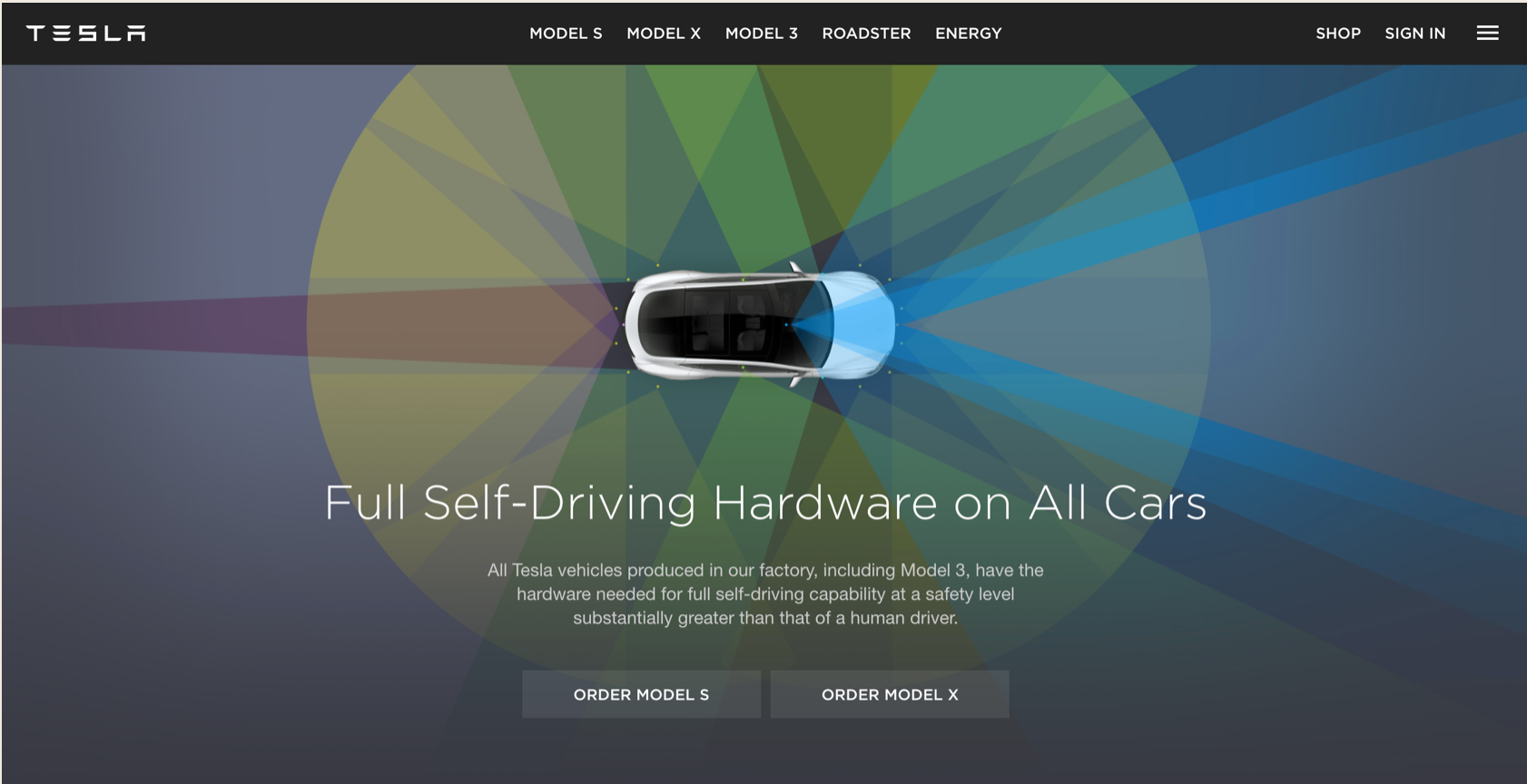

Tesla’s Autopilot is not a self-driving or autonomous vehicle system, but drivers of those vehicles could be forgiven for believing that’s what they’re getting. Note the language used to describe the equipment on Tesla’s cars (see the image above, taken from the company’s website earlier in the year). Thankfully, the driver in this 2018 crash was not fatally injured. Afterward, he told the NTSB in his interview “that the name Autopilot did not accurately describe the technology because the car did not fully drive itself.”

Why is this interesting?

Systems like Autopilot can significantly reduce driver fatigue and curtail fender benders, but only if the driver pays attention 100% of the time. They are designed as assistive technologies and are not intended to be a primary method of control. Advertising legend David Ogilvy once described his company’s misuse of research as being “like a drunkard using a lamppost, for support, but not for illumination.” The same could be said today for advanced driver assistance systems. We’re probably using these technologies the wrong way, and I believe a significant contributor to that problem is the branding and marketing of these systems.

Although systems like Autopilot can have serious consequences for the driver, passengers, and those in the vicinity, car manufacturers have nearly free rein when it comes to labeling them. Contrast that with the USDA’s food labeling standards, which are enforced through a formal application, approval, and review process. Specific labels are given tight boundary controls (“Arroz con pollo must contain at least 15% cooked chicken meat”). But if you want to brand your car’s systems as Auto-magic-pilot-drive-yourself, there is little today that the US Department of Transportation or Federal Trade Commission will do to prevent you.

If food labeling is important enough for such scrutiny, vehicle systems should be given equal attention. Errors caused by mislabeling in the food category tend to be limited to an individual consumer. Errors in driving motor vehicles, on the other hand, can be amplified well beyond the driver, harming non-consenting pedestrians, bicyclists, and other drivers.

Language drives expectations, which establish our relationship with technology. Research shows that when systems carry names like Autopilot (Tesla), ProPILOT (Nissan), and Pilot Assist (Volvo), 40% of survey respondents believe those systems “drive themselves.” For a new wave of vehicle technologies to show their real safety benefits, we must begin by calling them by their right name. Or at least not calling them by the wrong one.